Introduction

After reading this excellent post by Gunter Grodotzki, I finally got my act together to write this post in which I want to broadly cover the following topics:

- Database deployments: what, how and why

- How we used database deployments in our project

- What value can be obtained from database deployments

In a lot of the teams I have worked in, continuous integration (CI) and continuous deployment (CD) is generally considered a standard practice for the code base. However if there is a database involved, not a lot of thought is given to how it should form part of the DevOps lifecycle. Deployment of code is generally an easier problem to solve due to the lack of state involved. However since the database is usually considered the source of truth, database deployment is a key aspect of any deployment process and we need to make sure that the current state in each environment is preserved when migrating changes.

The starting step to automating your database (DB) deployments is to treat your database schema and any associated seed data the same way as your treat the application - as code that needs to be versioned. Once you have your DB changes or migrations in a version control system you can keep them in step with your application in a more controlled and manageable fashion. You will also need to decide which approach to use for database delivery: State or Migration based. Both have their place, but in this post I will be focussing on a migration based approach.

The actual execution of the deployment of your database can use various methods from manual scripts to auto generated updates to full deployment packages. You will probably find that over the lifetime of the project you will change the methods you use as you attempt to automate more of your pipeline.

Having a version controlled and repeatable DB delivery process is a key part of minimising potential bugs in the application, and it will allow future developers the ability to revert to any particular version of the database either to fix a bug or verify some behaviour.

Our Development Process

When writing database access code, any team can choose to use a shared development database or have each developer use a local copy on their machine. The former option is great if you have a dedicated database administrator (DBA) who can manage and synchronize all changes. The latter option allows a higher degree of isolation and is usually faster to load data, but can only work if the developer can install the database and related services locally. This may not always be possible due to licencing, application versions/dependencies or the size of the database. In our case we are able to develop locally so each developer can work completely independently.

This independance comes at a price however, especially if you use an EDMX file to manage your database. An EDMX file is an xml file that contains all the database modelling information, and although it is not a binary file, mergeing an EDMX file is very hard to do correctly and consistently. Therefore the general rule of thumb for our team is for each developer to ensure all migrations scripts had been run on the database before updating the EDMX and then to always use the remote changes when pulling updates from source control repository.

Our method of manually writing and executing scripts was getting tiresome and becoming more error prone as the team got larger and we added more functionality. We needed a better method to reduce the number of manual steps. Enter DbUp.

Adding in DbUp

A colleague of mine had used DbUp before and suggested we try it. The library is a great little dll that you can integrate into your project in any manner you see fit. We have wrapped the DbUp dll with some utility functions, so that we can migrate multiple databases in each environment (in our case, the main DB and a logging DB) and so that all scripts are stored in a central project for easy management.

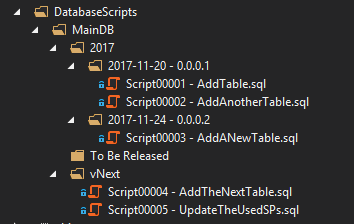

In our DB migration project we keep all scripts for each database we are targeting in a single folder called vNext. As we make a release into production we move all those scripts into a dated and versioned folder for easier management for the developers. We use the

To Be Released folder as an intermediate location for all scripts that have not been released, but we are not currently working on.

However DbUp only reads scripts in the top level directory, if you are using the external scripts option. To handle this, we have an MSBuild task that transforms the scripts from a project hierarchical folder structure into a flat folder structure. In other words, all scripts are dumped into the root folder in the deployment package, while the source project remains untouched.

This MSBuild task is shown below:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

<Target Name="CopySqlScripts" AfterTargets="Build">

<Message Text="***** DBMigrate: Start Copy Sql Scripts *****" Importance="High" />

<ItemGroup>

<MainDBScriptFiles Include="$(ProjectDir)\DatabaseScripts\MainDB\**\*.*" />

</ItemGroup>

<PropertyGroup>

<DBScriptsOutput>$(TargetDir)SqlScripts</DBScriptsOutput>

</PropertyGroup>

<Message Text="Clearing output folder: $(DBScriptsOutput)" Importance="High" />

<RemoveDir Directories="$(DBScriptsOutput)" />

<Message Text="Copying SQL files to output folder..." Importance="High" />

<Copy SourceFiles="@(MainDBScriptFiles)" DestinationFiles="@(MainDBScriptFiles->'$(DBScriptsOutput)\MainDB\%(Filename)%(Extension)')" SkipUnchangedFiles="false" />

<Message Text="***** DBMigrate: Finish Copy Sql Scripts *****" Importance="High" />

</Target>

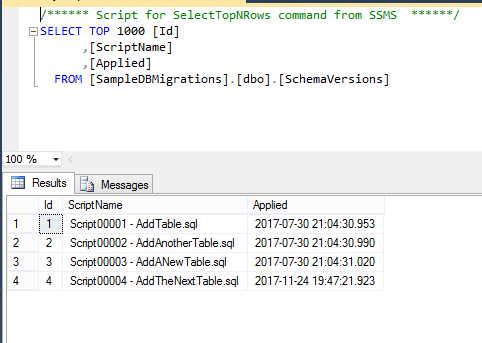

To track what scripts have been run against the database, DbUp creates a new table in database (schema and name can be customized) and inserts the name of the script and date applied into this table.

Another option instead of reading the scripts from the file system is to package them into the consuming dll as an embedded resource. The advantage of embedding the scripts means they cannot be tampered with at runtime, and script integrity is practically guaranteed. However the embedded file name contains the full file path of the script location in the project and so the project folder name cannot be changed once the script has been applied to a database. Moving the file in the source project will cause the folder name/file name to change in the complied binary and hence DbUp will think it is a new script to be applied it in the next migration. If your scripts are not idempotent or you only expect your scripts to run once, this will give you many headaches. For this reason we opted to read the scripts from the file system, so as to allow us to manage the scripts in the source folder in a more flexible manner.

Our Migration Process

We have integrated DbUp in 2 ways: a manually executed process triggered by the developer and an automatic process baked into the web site application start. The manual process called from an executable allows the developer to bring their local database up to date with all the changes without running the website. This same executable is also part of the build and deployment process and is bundled with the deployment package.

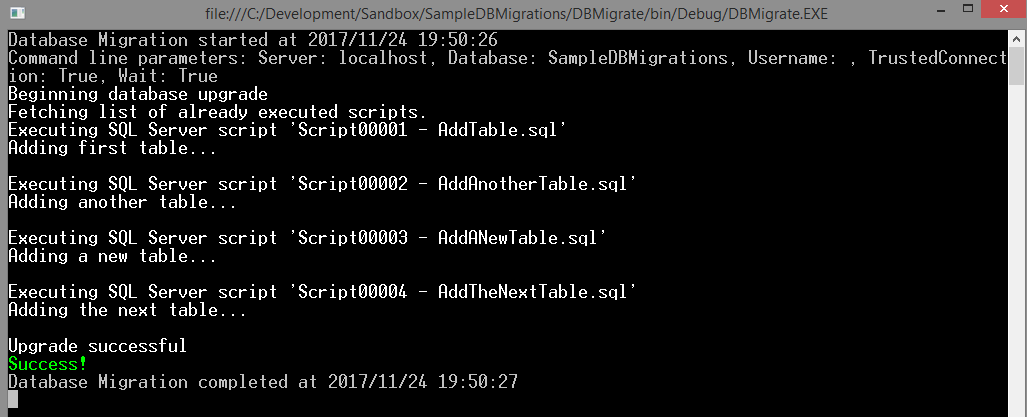

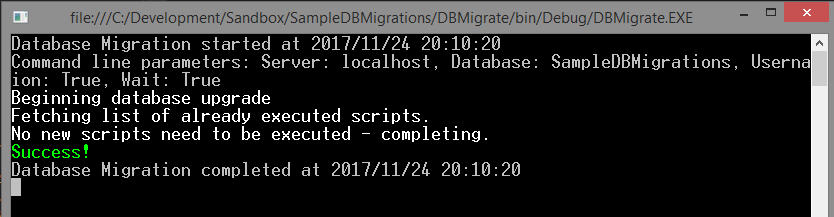

Running the DB Migrate exe looks like this:

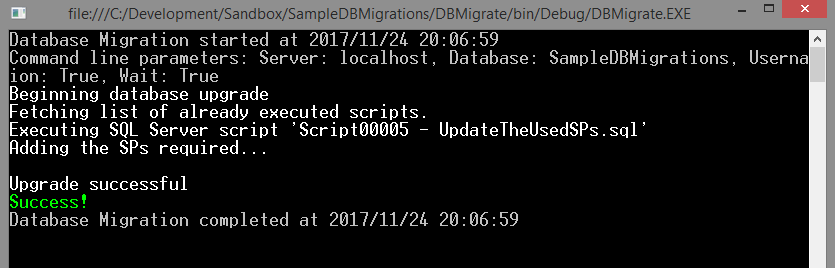

After adding more scripts, only the new ones are run:

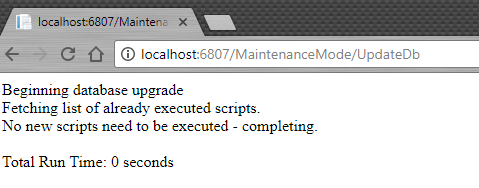

Repeatedly running the exe will not change anything:

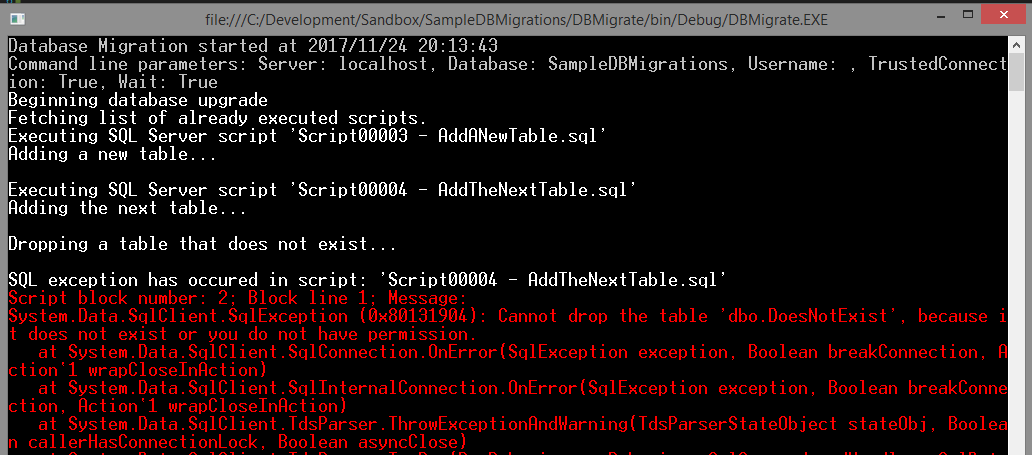

If a script fails, then DbUp reports the error and exits. No further scripts are run. The script that failed is not inserted into the database, so you can fix it and retry.

DbUp will automatically run from the next script after the last successfully saved entry in the database. For example, if scripts 1, 2 and 3 need to be run, and 2 fails, then after correction of the script only scripts 2 and 3 will be executed.

The problems with managing the EDMX still remain, but the use of the executable that runs DbUp ensures that each developer has all the latest changes applied in a consistent manner before they attempt to rebuild the EDMX file.

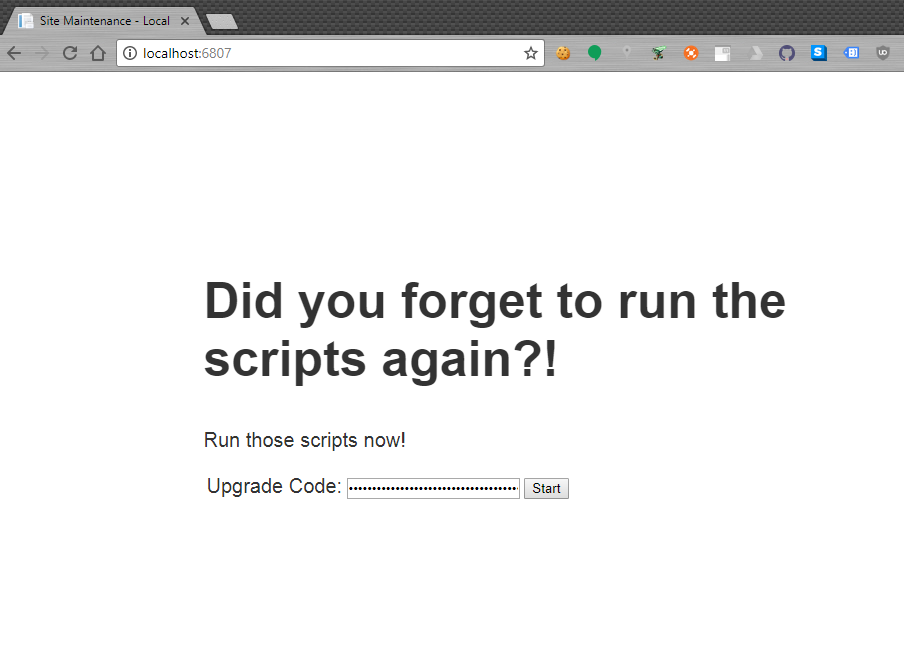

The use case for the automatic process is twofold, but in both cases it is to prevent an unexpected action from taking place. On a developers local machine, if they perform an update of the source code but fail to run the DB migration exe, the website will warn them of the schema mismatches and allow them to migrate the DB via the browser directly.

The developer can now run the upgrade right here, after entering the “secret” upgrade code…

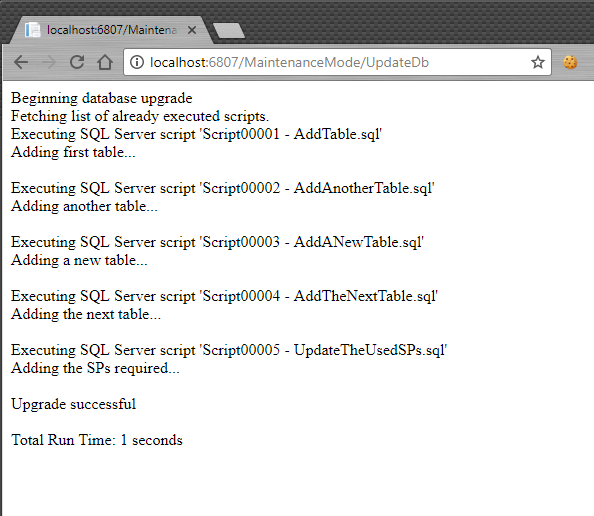

…and see the results in the browser window immediately.

Repeatedly accessing this url once the scripts have been run will have no effect:

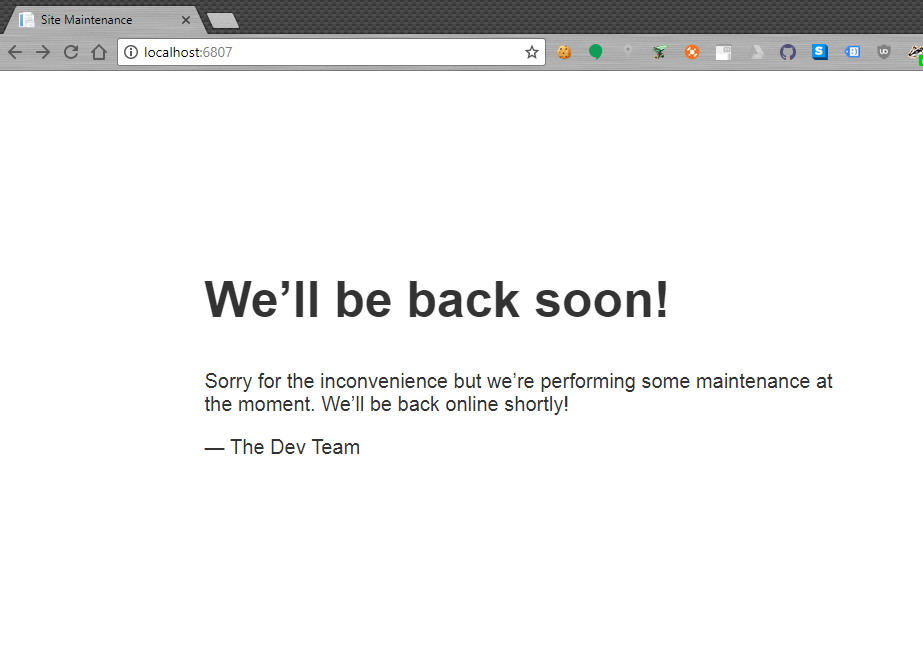

In any another environment (test, QA, prod etc), on web site startup the same process is run, but the view displayed is a generic “We are offline” message for the end user. This is in case of an accidental or unauthorized deployment or an unexpected system change and it is designed to prevent data loss.

Integration into the CI pipeline

On our CI system when we build the project, we follow a build once, deploy many process which means that we only compile and build the project once. As part of the build all the binaries and related dependencies are collated into a single versioned deployment package. The version number of the deployment package is also tagged in the source control system for easy future identification.

As part of the build process for the website the database migrations are automatically applied to the CI database. If the scripts fail, the whole build is failed in the same way if the unit tests fail, then the whole build is failed. Since each check in by a developer triggers a build, we can have a high degree of confidence that any errors in the migration scripts will be found and rectified quickly.

After build, our normal workflow is to automatically deploy the website to the development environment. The database migrations are applied automatically on deployment before the website is spooled up, so on start up it is immediately ready for use. We also conduct some basic smoke tests to ensure the website responds and is available.

This process is followed for each successive environment with all environments using the preceding environment’s deployment package. The CI system keeps a record of what version each environment is currently at and a tagged copy of that deployment package, which further helps dissuade anyone making “adhoc” changes!

Testing these migrations

The real test of any database migration is deploying to production. More specifically to the production database. So lets get a copy shall we? Since our database is small enough we can perform a daily backup and anonymization process, and then use a copy in local test environments, even on our local machines. It means that the developers have access to the data that is less that 24 hours old which makes hunting specific types of bugs and resolving certain issues a lot easier. Also each time that backup is restored locally all the migration scripts are re-tested, so any new data changes that could cause the migration to fail are resolved immediately. These repeated runs give us a high degree of confidence that the database migrations will be successful in production.

Outcomes

With these changes, we have simplified our database management process, increased developer productivity and overall reduced the likelihood of introducing new bugs related to mismatched or missing scripts. A massive value add for our client! :)

I have created a sample application to demonstrate how we have integrated DbUp and it can be found here.